The European project MERGING (Manipulation Enhancement through Robotic Guidance and Intelligent Novel Grippers), which launched in November 2019, aims to design a versatile, low-cost and easy-to-use robotic solution that manufacturers can apply to support or automate tasks involving the handling of flexible or fragile objects. It will consist of a new dexterous robotic gripper that takes advantage of an integrated adaptive electro-adhesive skin.

This skin will induce electrostatic attraction between the gripping surface and the object by means of an electric field produced by electrodes enclosed in the skin. The skin will also be able to conform to the objects being handled, which increases the contact area. As a result, the new gripper will offer better gripping performance at lower gripping forces, and so avoid damaging soft objects. The control of the complete robotic system will include, first, perception and supervision functions that adapt the system’s response to the execution conditions and to the highly variable behaviour of flexible objects; and second, control abilities that make human-robot or multi-robot co-manipulation of flexible objects safer, using Artificial Intelligence and Machine Learning. The robot will thus be able to learn how to handle soft objects without damaging them, as well as how to work safely side by side with humans.

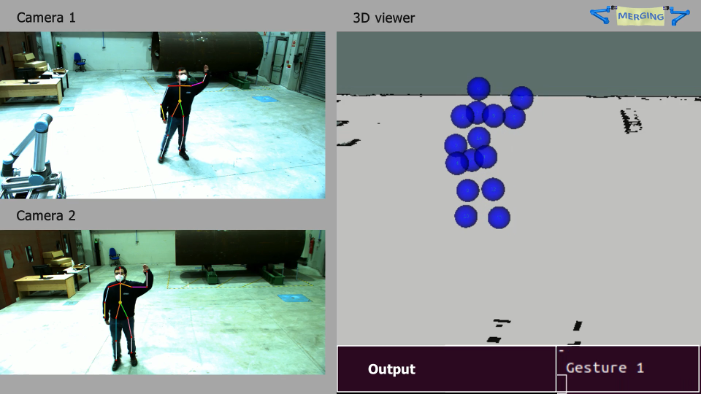

In the context of this project, AIMEN has developed the perception systems for the robotic cell, covering both perception for human-robot interaction and perception for grasping and manipulation. The perception system for human-robot interaction is based on stereo vision and deep learning techniques. It detects and tracks operators in the shared workspace and decomposes their movements. This decomposition makes the system aware of the positions of the operator's head and arms, and the gesture recognition system builds on this information.
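To illustrate how head and arm positions can serve as a base for gesture recognition, here is a minimal rule-based sketch. It is not the MERGING gesture recognition system, which the text describes only as building on the movement decomposition. The keypoint names (`head`, `left_wrist`, `right_wrist`), the coordinate convention (metres, z pointing up) and the gesture labels are all assumptions made for this example.

```python
from typing import Dict, Tuple

# Hypothetical keypoint format: (x, y, z) in metres, z pointing up (assumed).
Point3D = Tuple[float, float, float]


def classify_gesture(keypoints: Dict[str, Point3D], margin: float = 0.05) -> str:
    """Label a pose by checking whether each wrist is above the head.

    `margin` is an illustrative tolerance that keeps small tracking
    jitter around head height from triggering a gesture.
    """
    head_z = keypoints["head"][2]
    left_up = keypoints["left_wrist"][2] > head_z + margin
    right_up = keypoints["right_wrist"][2] > head_z + margin

    if left_up and right_up:
        return "both_arms_raised"
    if left_up:
        return "left_arm_raised"
    if right_up:
        return "right_arm_raised"
    return "none"


# Example pose: only the right wrist is above the head.
pose = {
    "head": (0.0, 0.0, 1.70),
    "left_wrist": (-0.3, 0.1, 1.00),
    "right_wrist": (0.3, 0.1, 1.95),
}
gesture = classify_gesture(pose)
```

A learned classifier would normally replace the fixed rule, but the input it consumes has the same shape: tracked positions of the head and arms.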

As for the perception that enables cognitive grasping and manipulation of fabrics, AIMEN is advancing the fabric recognition algorithms and the motion planning, especially for removing wrinkles. Meanwhile, EPFL has been working on the feedback from a soft force sensor, to be mounted later on the fingertip of the enhanced gripper, in order to improve the monitoring of object handling.

The perception system uses 2D and 3D information to detect the fabric and its wrinkles. Deep learning techniques are then used to find the best manipulation strategy for removing the wrinkles, that is, the best grasping points and the best movements to perform. The manipulation task will be carried out using the information from this perception module and will be modified online using feedback from the fingertip soft force sensors.
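As a rough illustration of how 3D information can reveal wrinkles, the sketch below fits a plane to a height map of the fabric and flags the pixels that deviate from it. This is a simple geometric heuristic chosen for the example, not the project's detection method, and it does not cover the deep learning step that selects grasping points. The function name `detect_wrinkles`, the height-map input and the 5 mm threshold are assumptions.

```python
import numpy as np


def detect_wrinkles(height_map: np.ndarray, threshold: float = 0.005):
    """Flag pixels whose height deviates from the best-fit plane.

    height_map: 2D array of surface heights in metres (assumed input format).
    threshold:  deviation in metres above which a pixel counts as a wrinkle
                (illustrative value).
    Returns (mask, residual): a boolean wrinkle mask and the signed deviation.
    """
    rows, cols = height_map.shape
    ys, xs = np.mgrid[0:rows, 0:cols]

    # Least-squares fit of z = a*x + b*y + c over all pixels.
    design = np.column_stack([xs.ravel(), ys.ravel(), np.ones(rows * cols)])
    coeffs, *_ = np.linalg.lstsq(design, height_map.ravel(), rcond=None)
    plane = (design @ coeffs).reshape(rows, cols)

    residual = height_map - plane
    return np.abs(residual) > threshold, residual


# Synthetic example: a slightly tilted flat fabric with one 2 cm ridge.
ys, xs = np.mgrid[0:50, 0:50]
fabric = 0.001 * xs + 0.002 * ys
fabric[:, 25] += 0.02  # the simulated wrinkle

mask, residual = detect_wrinkles(fabric)
wrinkle_columns = np.unique(np.nonzero(mask)[1])
```

A plain least-squares fit is slightly biased by the wrinkles themselves, so this only works when wrinkles cover a small part of the surface; a robust fit would be a natural replacement on heavily creased fabric.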

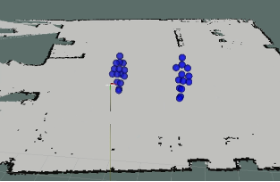

Moreover, AIMEN is working on monitoring a ply while an operator manipulates it. A 3D sensor is used for this, and the results obtained with a white ply were successful.

The video shows how the point clouds received from the 3D sensor in free-run mode are processed in real time.